Tree structures (and some graphs) embodies structured and semi-structured data (XMLs, HTMLs, LaTeX, diagnostic trees, etc) in hierarchical form. They often contain labels, attributes, important structural, hierarchy, weight and relational information. These additional data dimensions (e.g. hierarchy, structural, weight, etc) represented by tree structures are extremely valuable in many use cases and present opportunities to increase ROI of business data. Reasons why tools and functions deployed for processing or analysing tree structures must be able to exploit its native form (hierarchical) to prevent information and context loss during consumption.

In this 2 part blog, we share some useful tips, steps and lessons learned from processing tree data for analysis and ML based data mining. In part 1, we discuss some of the steps taken to prepare and model the tree data using python, duckdb and Malloy. Python is a ubiquitous scripting language with a large set of libraries for building complex tools and applications. Duckdb is an amazingly easy to use columnar DBMS for analytics with a rich sql dialect, client APIs to both Python and Malloy. Malloy makes data modelling, transformation, analysis, visualisation and analytic coding effortless. Moving data out of the Malloy model is as simple as firing a Malloy query and transforming the relation into a dataframe, which can be consumed for ML and charting.

Together the 3 has become a valuable combo for many when accomplishing complex data projects. Part 2 which will be posted later, will centre more on data mining techniques for measuring similarity, clustering and regression. For additional background information on some of the functions discussed here and understand notions behind the design, please refer to my previous blog @ ‘Mindful Geek’.

2.0. Source of Trees

The demo sample is a XML from a public API.. We also repurposed previously shared playground assets in Worlds of Data and Mindful Geek to extract XMLs from public APIs and capture parent, child, attribute and tag information from those sources.

3.0. What to analyse?

While many data projects do emerge from a business need or question - which means there is a clear idea on what insight should be extracted from a data source, an opportunity to extract more value from any accessible data source (beyond the business question) should not be missed. This is because the true value of data lies in explaining the unexplainable (or questions never asked) events in a business or operation. If we were to lend the ‘Maslow’s Pyramid’ as a reference for values, this type of discoveries will fall in the topmost section of the pyramid. And the topmost section often translates to high value differentiators.Which makes building hypotheses and conducting data experimentations with various cross functions a necessity.

4.0. Some Popular Data Mining techniques for Tree Structures

Depending on the challenges or business question, it is best to deploy a combination of data mining techniques for best outcome. Some methods skew towards capturing the structural information, while others the labels and contents of the tree or some may constitute hybrid approaches. Here are 5 most popular data mining techniques for trees for consideration:

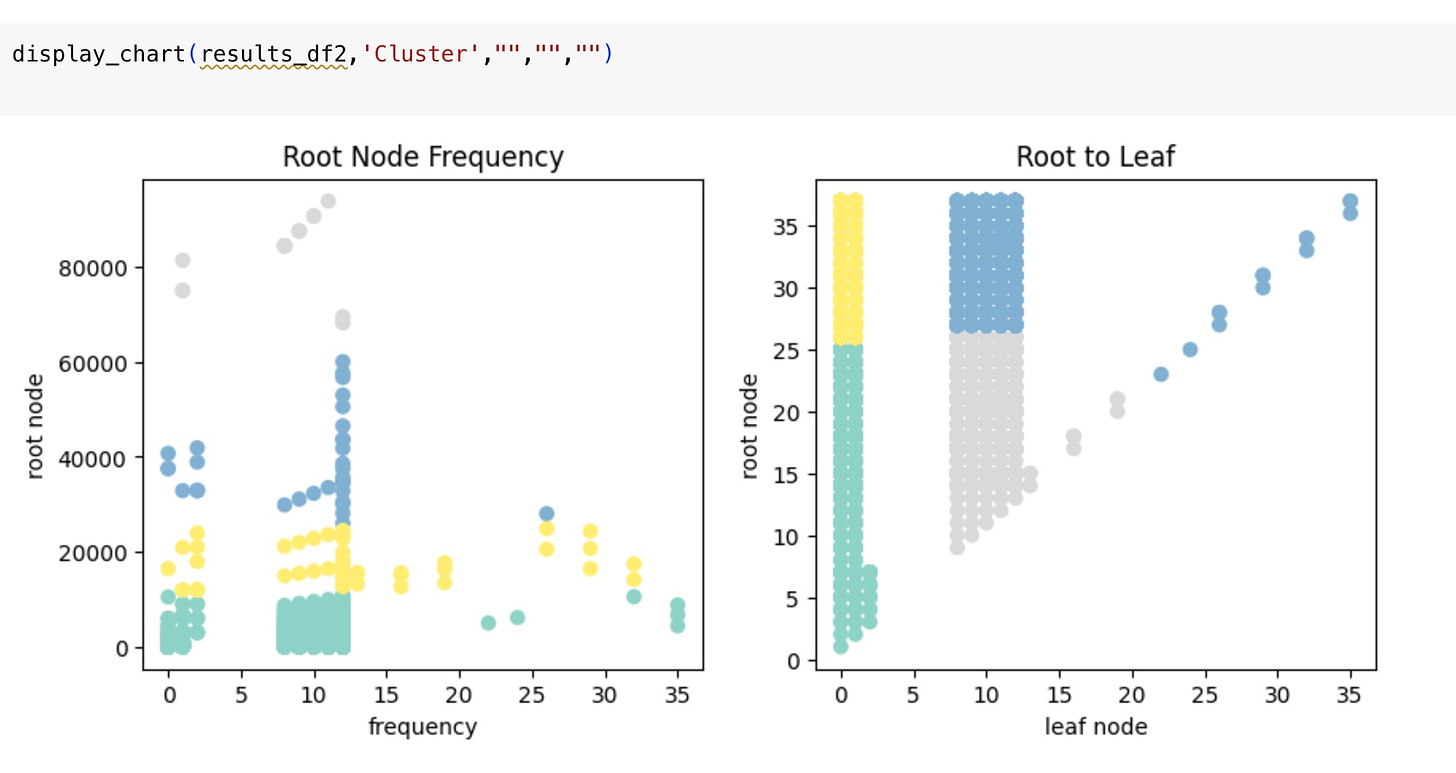

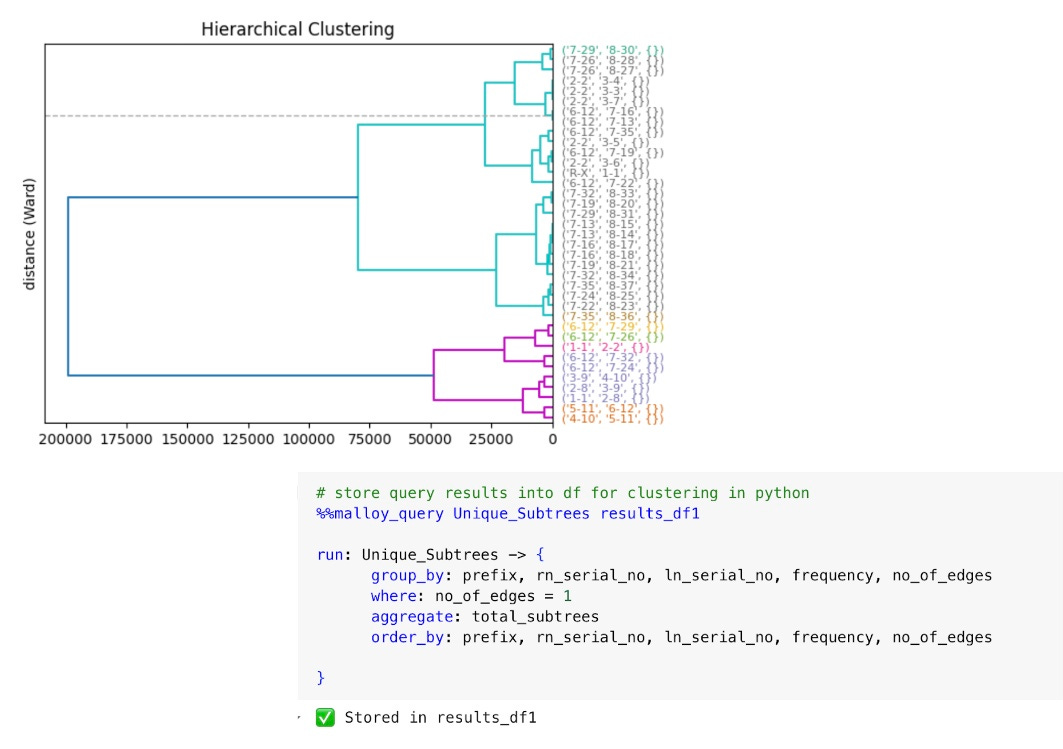

4.1. Hierarchical Clustering.

This method builds a hierarchy of clusters. It’s also widely known as a dendrogram which represents the nested clusters, where each node is a cluster that contains all the clusters below it as subclusters. Agglomerative and divisive clustering are two main approaches under this technique. Agglomerative starts with each data point as a separate cluster and merges them based on similarity, while divisive starts with all data points in one cluster and splits them into smaller clusters.

4.2. Subtree Pattern Mining in Trees.

Techniques such as frequent subtree mining aim to discover recurring patterns within tree structured data. Examples include the TreeMiner algorithms, which can efficiently find frequently connected subgraphs in a database of labelled graphs. These methods are especially useful in XML document analysis.

4.3 Tree Kernels.

Tree kernels are a class of kernel functions used in kernel-based learning algorithms (e.g., Support Vector Machines) to measure the similarity between tree structures. They recursively decomposed trees into substructures such as subtrees or subpaths (vertical structure) and compute a similarity score based on the matches of these substructures. Tree kernels are particularly useful in natural language processing for comparing parse trees or in computational biology for comparing phylogenetic trees.

4.4. Decision Trees.

Decision Trees are ML algorithms used for classification and regression tasks. The tree structure represents the hierarchical nature of the decision-making process. Algorithms like C4.5, CART (Classification and Regression Trees), and ID3 (Iterative Dichotomiser 3) are widely used for inducing decision trees from data. They recursively partition the data based on the attributes that best split the data, leading to a tree structure that can be used for prediction.

4.5 Tree-Based Ensemble Methods.

These ensemble learning methods use multiple decision trees and aggregate their results to improve prediction accuracy. For instance, Random Forests build a large number of decorrelated decision trees by using bootstrap samples of the data and random subsets of features. Gradient Boosting Trees, on the other hand, sequentially build trees that predict the residuals or errors of prior trees, improving the overall model in a gradient descent fashion. Both methods leverage the tree structure to enhance predictive performance.

Each technique has its strengths and is suited to different types of challenges involving data inherently structured as trees or where tree structures are effectively leveraged for an analysis.

5.0. The Process

There are 6 key steps in preparing XML tree data for analysis and visualisation.

5.1. 1st Step - some ideas on data dimensions and measures required down the line.

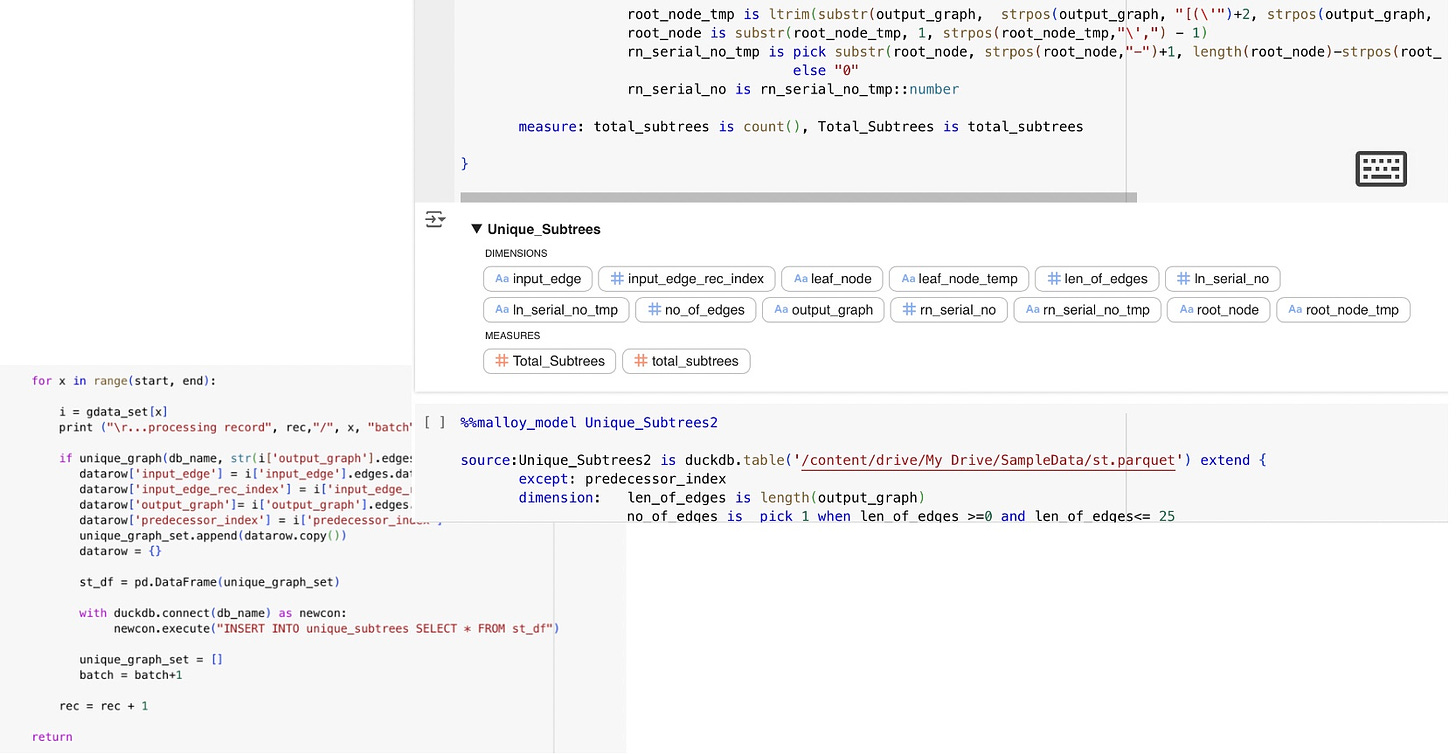

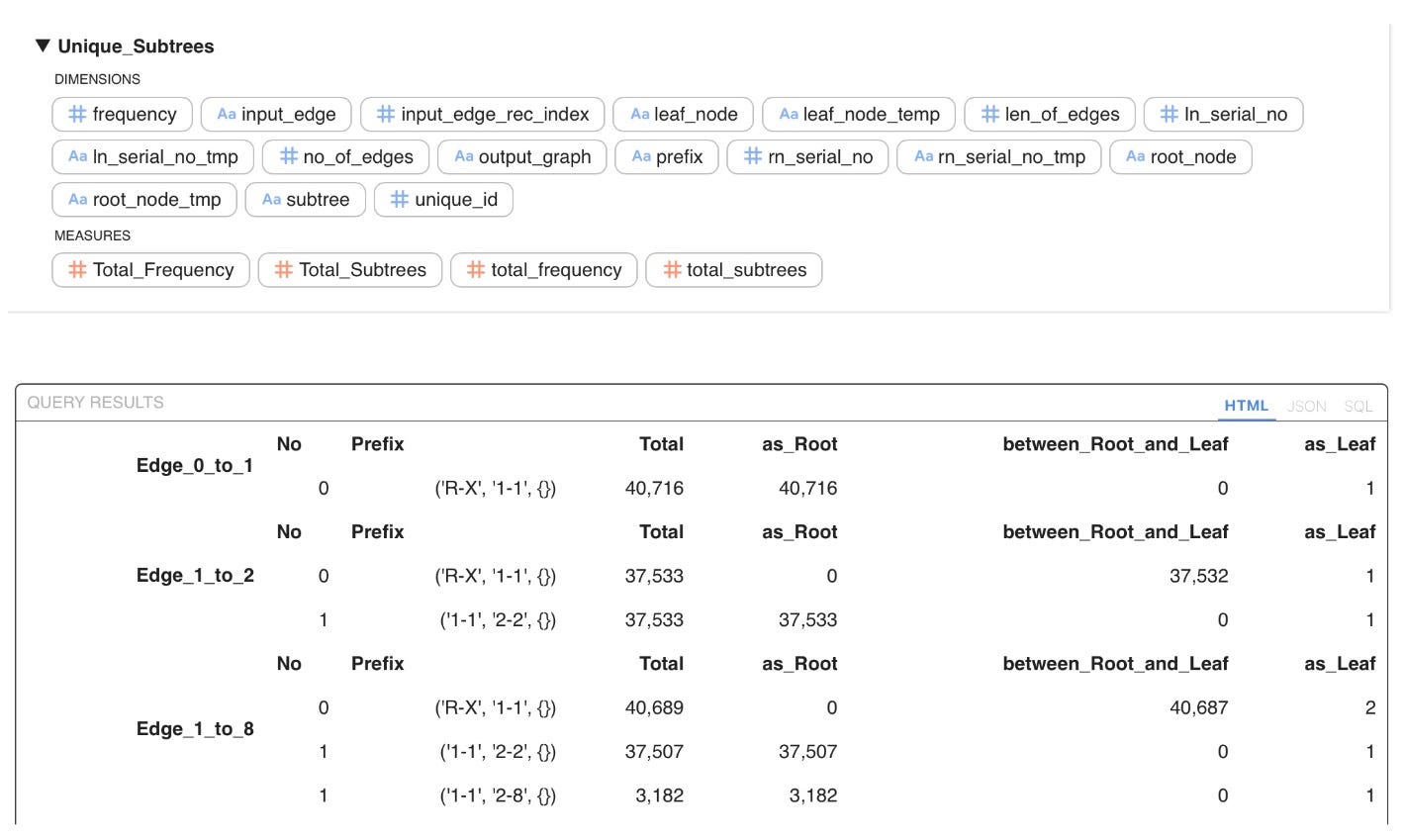

The first step is to think about some of the high level data dimensions and measurables required for various analysis down the road. Some dimensions picked for this example are the i) subtree, a graph object; ii) node serial number, a numeric type; iii) node/edge labels, a string type; iv) number of nodes, a numeric type; v) number of edges, a numeric type; vi) frequency of a subtree in the tree, a numeric type; vii) and a unique numeric ID for each subtree. Measures such as total subtrees and frequency of a subtree (number of times a subtree appears in a tree) are both critical to validate correctness of subtree sets and apply kernel methods at later stages.

5.2. 2nd Step - figuring the solution components and high level architecture.

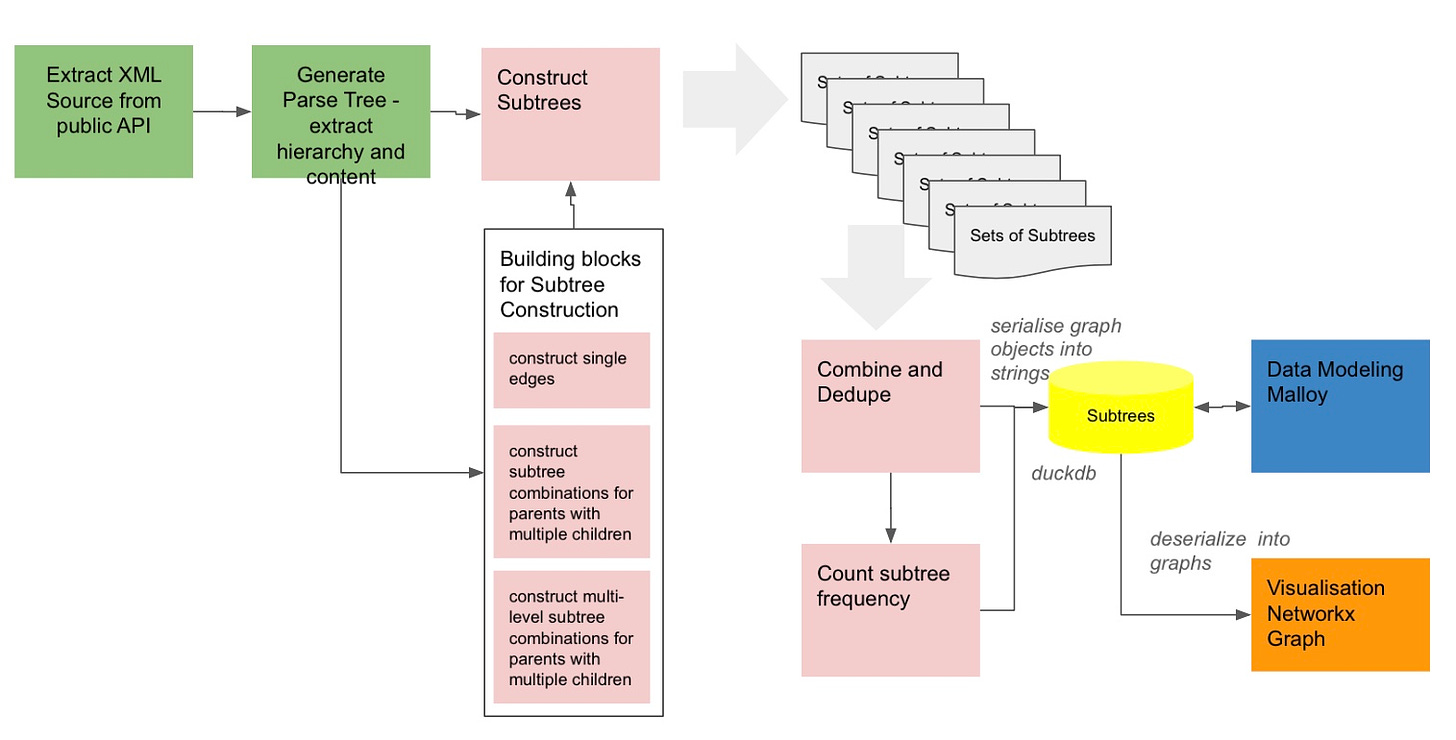

As aforementioned, in this example, XMLs are the source of tree structures. Parent, child and attribute data extracted using the XML parser is piped into a graph constructor to build basic subtree building blocks (graph objects such as single edges, multi child combinations, etc). The subtree building blocks serve as the foundation to reconstruct all subtree combinations of the tree. The output is a set of subtrees (table) stored in a columnar database and persisted in a parquet file. Additional columns are computed post processing and updated directly to the duckdb. Finally data is modelled in Malloy for various transformation, analysis and visualisations. In addition, Maloy models and queries pipes data to python/ pandas dataframe for ML and 3rd party visualisation tools.

The focus of the solution design is to i) extract XML data from a public source, ii) generate a parse tree to extract element data,iii) generate 3 types of subtree building blocks as graph objects, iv) construct subtrees (graph objects), v) combine and dedupe all subtree sets generated, vi) store subtrees in duckdb and parquet, vii) count number of time a subtree appears in the tree, viii) and finally model data in Maloy for analysis and use.

Each step contributes data artefacts used in the next step as shown in diagram 3 above.

5.3. 3rd Step - Identifying suitable tools for the job.

In every task, potentials for simplification, reusability and repurposing of existing tools is crucial. For example, in this experiment python is used to build various functions to construct subtrees and pre-process data (click for code on colab) with the aid of libraries such as i) ElementTree for XML parsing (click for code on colab); ii) Networkx for graph construction and visualisation; iii) duckdb for storing and persisting processed data; iv) Malloy for data modelling, analytic queries, visualisation and transformation (click for code on colab). The use of Python, SQL and Malloy are seamless across the solution, each helps developers use the right tool for tasks.

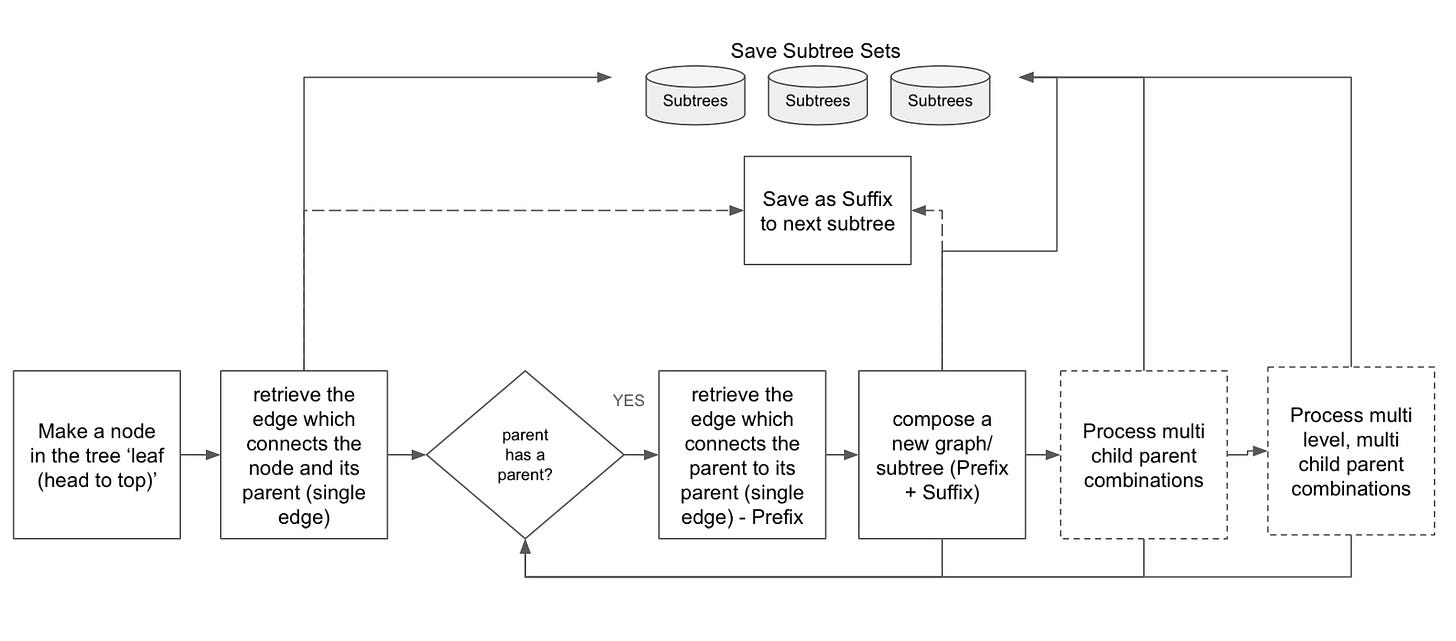

5.4. 4th Step - building new functions - the ‘Subtree Constructor/Extractor’.

One key function in preparing tree data is the function used to construct subtrees as Networkx graph objects. There were 2 approaches considered to obtain the subtrees: i) first approach is to extract the subtree from a full graph by taking top down ‘tree walks’ and capture the path as subtree; ii) the second approach is to iteratively turn every node in the tree into leaf, from top down direction and reconstruct the substrees one ascendant at a time. In both approaches, each node will generate a set of subtrees with increasing height and size as they reach the ultimate root in the tree.

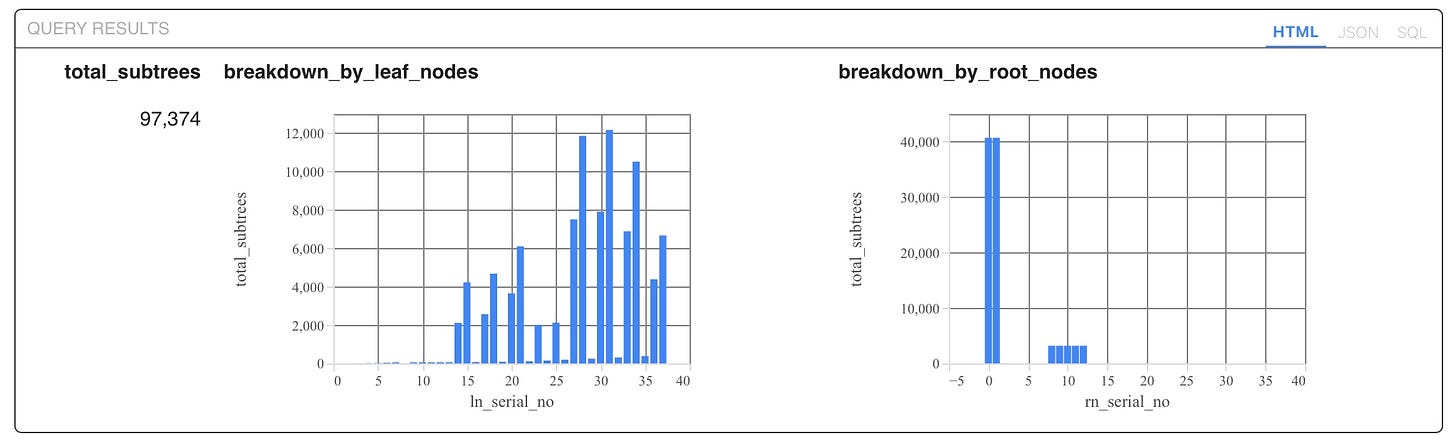

In this example we used the second approach and generated over 97 K of subtrees.

The custom function detects parent, predecessors and their multi child combinations during subtree construction . Every tree-walk from every ascendant node and tree level outputs a unique subtree. Due to the various patterns in the tree (e.g binary, ternary, k-nary, hybrid) we used 3 different modules to process the variations, combined and de-duped the sets before persisting into duckdb and parquet. Currently, the function has a limitation where it expects multi-child nodes of the same level to have a seamless pattern to work correctly. For instance, in the sample we used here, node 22 and 24 both have only 1 child while their other siblings have 2, causing the constructor to miss them in some combinations. Hence we have 2 missing sets of subtrees.

This is not a huge setback for our current use case, though we intend to rebuild the function in the next iteration into a generic which seamlessly facilitates various tree patterns together.

5.5. 5th Step - Execute and generate subtree sets.

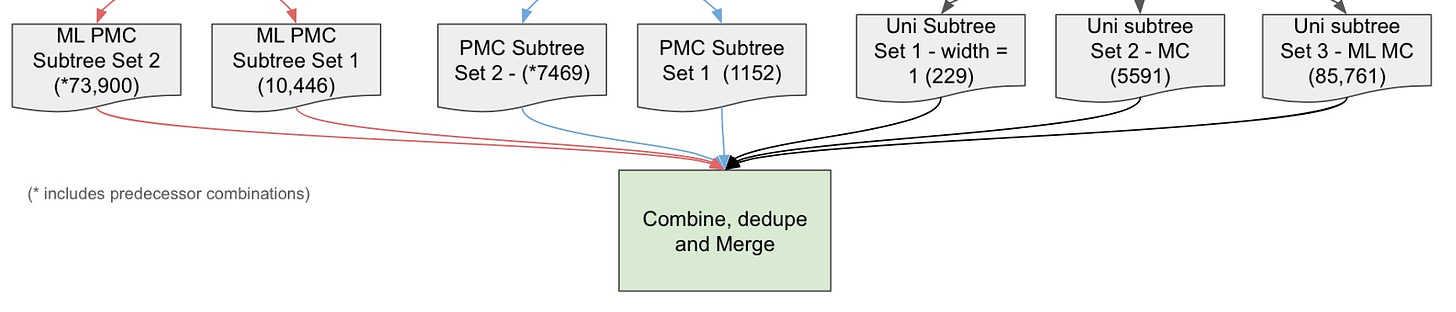

The demo XML sample had 37 nodes in a tree with height 8. The custom function generated 7 sets of subtrees as shown in diagram 7 below. Some large sets take a couple of minutes to complete if on a single machine.

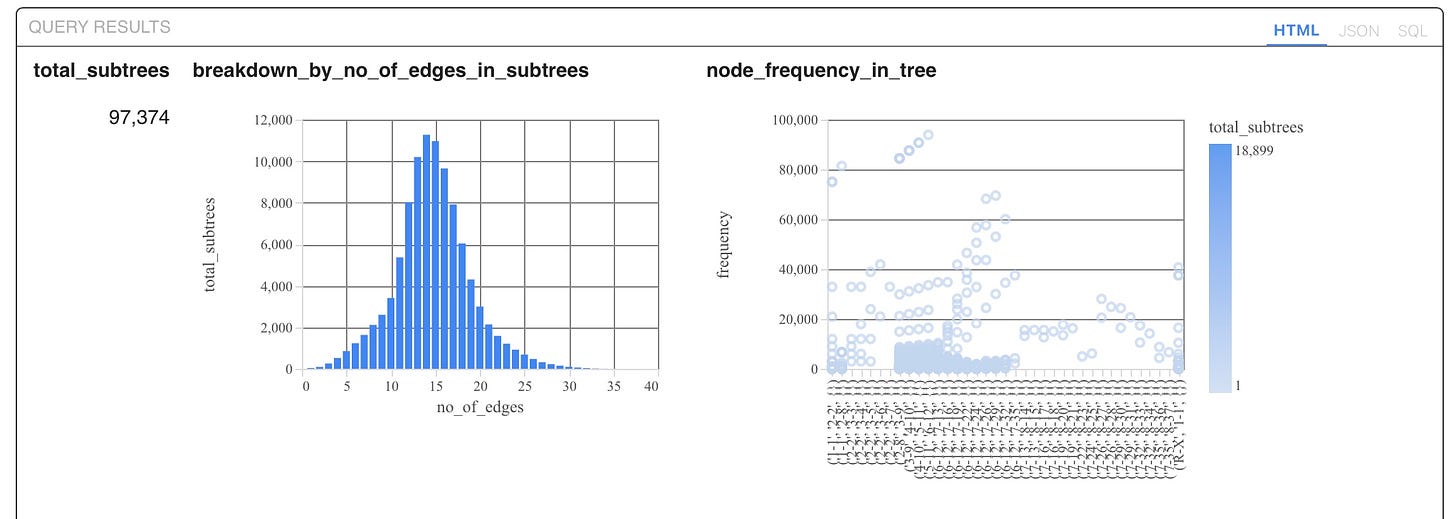

When combined and duplicates removed, the final set had over 97,300 unique subtrees written into a duckdb table and a parquet file. Some combinations involving the 2 irregular nodes (node 22, 24) were missing as mentioned earlier. This step was particularly time consuming and resource intensive especially when multiple large XMLs are involved.

Upon completing the subtree preprocessing step, a new metric - frequency of subtrees in tree computed and updated into the table.

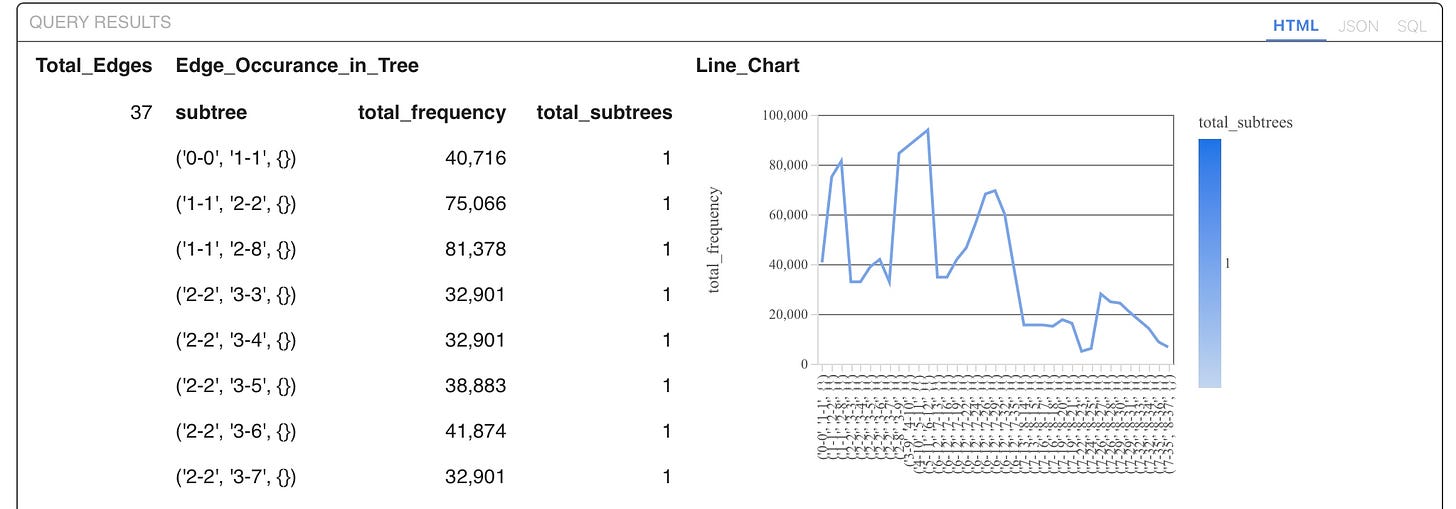

5.6. 6th Step - Model and Visualise

The last step is to model data and build all queries in Malloy. Malloy has built in features to turn queries into pretty line, bar or scatter charts. Malloy can also store query results in a dataframe to facilitate conversion into external tables, charts and execute ML functions when needed.

Conclusion

The subtrees used for modelling are in serialised form in duckdb/parquet, as it’s much faster to search a string than a graph object at this scale. Nevertheless, the string forms can easily be deserialized using Networkx by just passing the subtree strings containing nodes, edges and attributes to a Networkx graph object.

Like to supercharge your data project ? Feel free to leave me a note here!

References:

Modelling Data and Building Queries with Malloy (click for code on Google Colab)

Python Subtrees Constructor (click for code on Google Colab)